The FAST Test… It didn’t live up to its name

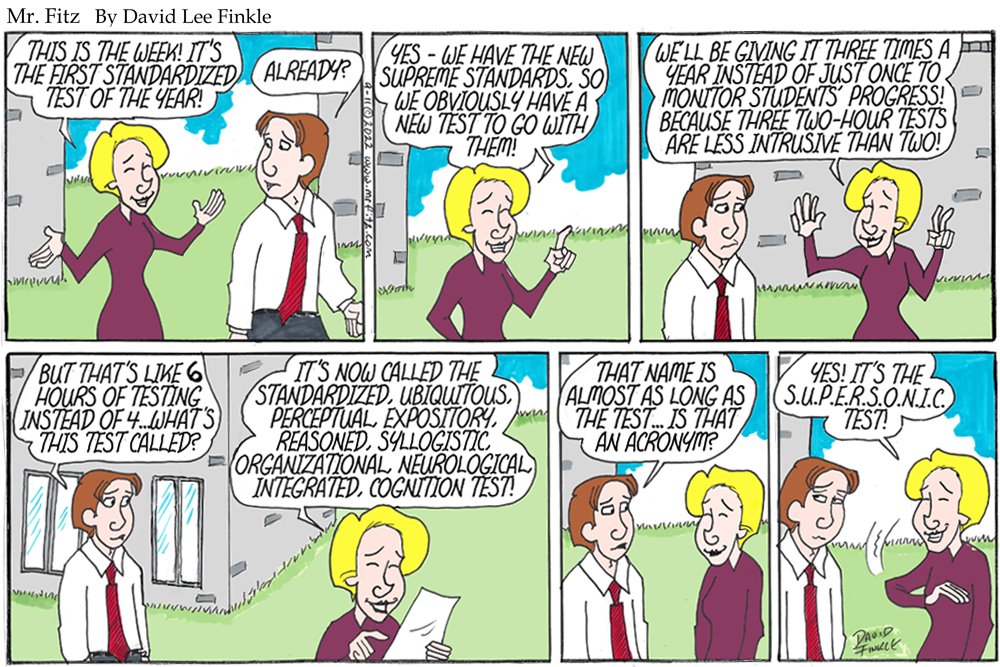

I ran this strip today in the wake of having administered Florida’s new standardized literacy test on Thursday, September 8th. The new test is actually the “FAST” test (Florida Assessment of Student Thinking). It’s also being called Progress Monitoring.

When the FSA (Florida Standards Assessment) went away, there was great rejoicing. Those of us who knew testing would be back, like a deadly slasher in some terrible horror movie franchise, said, “Wait a bit.”

Sure enough, like Jason and Freddy Krueger and Michael Myers, the test is back. Yes, it has a new name. Yes, it has a new gimmick. The two go hand in hand. The FAST test was supposed to be a speedy, fleet checkup of where students were literacy-wise. We would take it three times a year instead of just once near the end of the year, so that we could monitor their progress.

Here’s how it actually went down.

First, it is not fast. The script I read says students have only an hour to take it. But we were told that the state wanted us to give extra time so students could finish. So it ran past an hour; it took up most of the morning. This will happen twice more this year. So we’re actually looking at six or more hours of testing spread throughout the year rather than just four hours over two days. That might not sound like much of a difference, but when you want to teach and you typically lose about 25 to 30 instructional days to testing each year, any additional assessment days are very, very frustrating.

As usual, my job in the testing room was to make sure that students were actually taking the test, which required looking at their screens without actually reading the test or seeing any of the texts or questions. Fortunately, my eyes are aging, which makes this task easier. But from what I could see, the test was in the exact same format as the old test (a split screen with texts to read on the left and questions on the right), on the exact same platform. To that extent, it was the same old test.

The great innovation of FAST testing is supposed to be its “progress monitoring.” Kids take it three times a year, and we’re supposed to get feedback on their performance fast so that we can “use data to inform instruction.” In theory, this sounds like it might be good. Here’s the reality: yes, we got data back by the next day, but it came as an enormous Excel chart. Student names were all the way to the left, a lot of other information (age, gender, student code, etcetera) filled the middle, and their level of proficiency sat all the way to the right, so far from their names that it’s hard to match names to performance levels.

The level of proficiency is basically a numerical ranking. Level 5 is something like “performing above level,” Level 3 is “at level,” and Level 1 is “far below level.”

This is not useful data. Even this early in the school year, we as teachers already have a pretty good idea of who’s operating at what level as readers and writers. What we need, if we’re going to improve these scores, is something that tells us which standards the students fell down on, along with sample questions that show us how the students are being questioned. In the past, I learned what level students were at only at the end of the school year or over the summer, when it was too late to teach them anymore. I thought this might actually be helpful. But being told a student is a Level 1, with no specifics about why or which skills they are struggling with, is not actionable. What do I do? Teach harder? I’m pretty much teaching as hard as I can.

And who set these levels anyway? They are completely arbitrary. Do we really have any idea what a Level 1 or 3 or 5 actually means in terms of what a student can or can’t do? Do these scores tell us why these students are at these levels?

So yes, we have a bunch of data, and I’m sure we’ll meet as teachers soon to look at it. But in the meantime, I’m going to go back to my class tomorrow and continue to do what I do. I will continue to not view my students as test scores. I will continue to try to engage them with real reading and writing experiences, not test preparation. I will continue to make my year-long argument that reading and writing matter to their lives, their happiness, their success, and the health of our democracy.

I’m on my fifth set of standards. I’ve been through the Sunshine State Standards, the Sunshine State Standards 2.0, the Common Core Standards, the Florida Standards, and now the Florida BEST standards. Every time they change we are told to act and teach as though these standards are the be-all and end-all of our existence, holy writ, the only reason to spend time in a classroom. I don’t buy it. How long will it be before we have yet another set of standards and yet another standardized test to go with them?

I may be retired before that happens. But after watching the students in my FAST testing session sit and look numbly at the computer screens, nodding off and forcing themselves to answer questions about texts they found boring, I can only think that the one constant in the world of standards and standardized testing is this: the longer we stay on this course of constant assessment, the less engaged students become in school, in literacy, and in learning.